The lack of transparency and understanding around AI isn’t just annoying – it has consequences. For those using it and those reading what has been produced by it.

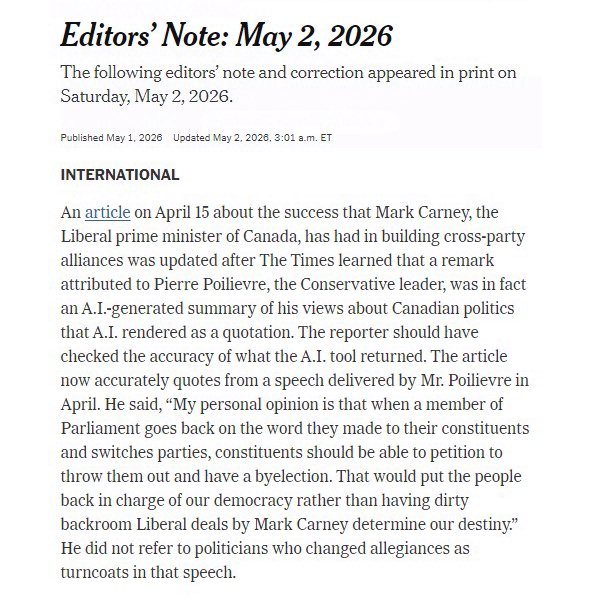

TLDR: A piece appeared in the New York Times with a quote attributed to Pierre Poilievre. The quote was made up by AI, no one bothered to check the quote. The piece ran and now the New York Times has published a correction saying ‘oops – the reporter should have checked’ and I think that’s a bit weak.

The NYT correction states says “the reporter should have checked the accuracy of what the A.I. tool returned” – which I suppose is their way of painting the incident as a simple verification lapse – but IMO that underplays what happened. There’s a HUGE difference between (a) using AI to summarise background info or using it to help structure notes and (b) allowing it to attribute quote. Quotes aren’t just details or info. They are evidence. If the reporter didn’t check, the problem isn’t AI. The problem is that the reporter outsourced attribution, a foundational part of their job – the part of the job on which public trust is built – to a tool that hallucinates. The second problem is that the reporter didn’t bother to check. The third problem is that the editorial workflow had room for the previous two problems to go un remarked until after publication.

That is all down to people. Not the tool

Of course, when it comes to light, the NYT is momentarily embarrassed but the ripple effect lives on – it erodes the public trust in what for decades was a paper of record. I should probably say ‘erodes even further’ given the decline in quality at the NYT from its once lofty perch of excellence. It also hands every bad-faith actor a glaring, very public, high profile citation for “even the NYT uses AI to make up quotes.”

We all know that an article, when published, will reach exponentially more people than the correction. In this case, it is entirely possible that the correction will become the story and that story will have a far longer tail than the original piece. Not because the robot got it wrong but because the people, the editorial guardrails and the workflow that one might expect to be in place at such an organisation failed – and they seem to have shrugged it off. Maybe a review of their ‘Principles for Using Generative A․I․ in The Times’s Newsroom’ page is in order.